Algorithm complexity, do you remember what is that? Do you know, what is it?

So, I think after one decade without reading nothing about Time Complexity, Space Complexity and many other things; your algorithm skills may be a little bit “rusty”. I know about me, it happens with me, maybe it is your case as well.

I know that many people reading this article will say that algorithm complexity and other things are worthless to most IT companies. What really makes sense is to knowing several tools and two or more programming languages.

I dare say to you, do not believe it. It is very important to know several tools and many programming languages. However, the most important is to write a source code with the minimum expected about performance and properly manage memory usage. Behind of any good tool, has a well-written code.

To help myself, I am going to start to remind all the concepts and get my hands dirty with known algorithms. I will share my steps with you, and I invite you to participate in this process with me.

Today we will learn about what is Algorithm Complexity, let’s start.

At a high level, we can say that “Algorithm complexity is concerned about how fast or slow particular algorithm performs”. Of course, the final result for users depends on many things, like processor speed; instruction set, disk speed, a brand of the compiler, etc.

The Algorithm Complexity is a numerical function T(n) — time versus the input size n. So, if we use only the logic exposed above, the performance will be different on different devices/computers. How do we fix it? To fix it, we will measure time T(n) as the number of elementary “steps”. It means we will estimate the algorithm asymptotically.

When we write an algorithm, and we want to know if it is more or less, performative we should use asymptotic notation. Let us represent the time function T(n) using the “big-O” notation to express a runtime complexity of the algorithm.

Big O notation is a mathematical notation that describes the limiting behaviour of a function when the argument tends towards a particular value or infinity.

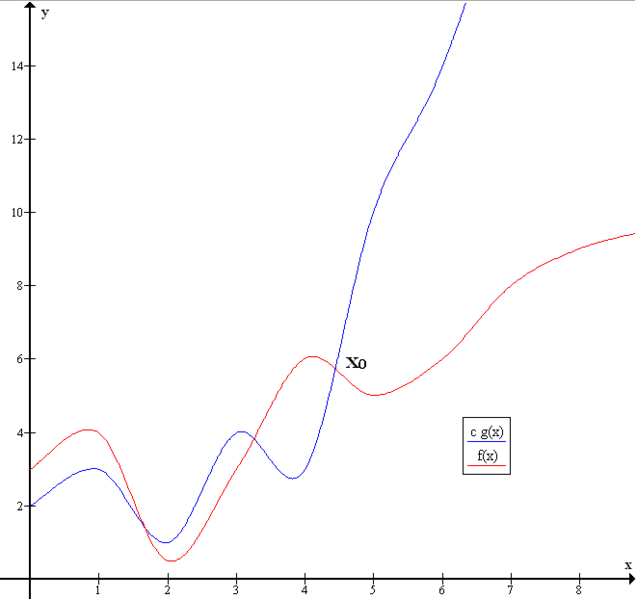

Below we have two functions “g(x)” and “f(x)”, the “y” axis the is time, and “x” axis is the number of inputs. We can see that the function “f(x)” spend less time executing more number of inputs.

Example of Big O notation: f(x) ∈ O(g(x))

There are many notations of time complexity known, the most common are:

Linear time O(n): time execution is directly proportional to the input size.

Logarithmic Time O(log n): time execution is proportional to the logarithm of the input size.

Constant Time O(1): time execution requires the same amount of time regardless of the input size.

Quadratic Time O(n2): run in logarithm time if its time execution is proportional to the square of the input size.

I will not give you examples here, because we will implement algorithms and apply “Algorithm Analysis”. Do not worry if you still do not understand these concepts. When we implement the algorithms, we will use these concepts.

The next article, we will talk about Time Complexity and Space Complexity. See you soon.

Cleison Ferreira de Melo

Estía Training Instructor

Source:

https://www.cs.cmu.edu/~adamchik/15-121/lectures/Algorithmic%20Complexity/complexity.html

https://en.wikipedia.org/wiki/Computational_complexity#Asymptotic_complexity